One complaint I hear all the time online and in real life is how complicated infrastructure is. You either commit to a vendor platform like ECS, Lightsail, Elastic Beanstalk or Cloud Run, or you go all in with something like Kubernetes. The former are easy to run but lock you in and sometimes get abandoned by the vendor (looking at you, Beanstalk). Kubernetes runs everywhere, but it is hard and complicated and has a lot of moving parts.

The assumption seems to be that with containers there should be an easier way to do this. I thought it was an interesting thought experiment. Could I, a random idiot, design a simpler infrastructure? Something you could adapt to any cloud provider without doing a ton of work, that is relatively future proof and that would scale to the point where something more complicated made sense? I have no idea, but I thought it could be fun to try.

Fundamentals of Basic Infrastructure

Here are the parameters we're attempting to work within:

- It should require minimal maintenance. You are a small crew trying to get a product out the door and you don't want to waste a ton of time.

- You cannot assume you will detect problems. You lack the security and monitoring infrastructure to truly "audit" the state of the world and need to assume that you won't be able to detect a breach. Anything you put out there has to start as secure as possible and pretty much fix itself.

- Controlling costs is key. You don't have the budget for surprises, and a massive spike in CPU usage is more likely a problem than organic growth (and if it is organic growth, you'll likely want to be involved in deciding what to do about it).

- The infrastructure should be relatively portable. We're going to try and keep everything movable without too many expensive parts.

- Perfect uptime isn't the goal. Restarting containers isn't a hitless operation and while there are ways to queue up requests and replay them, we're gonna try to not bite off that level of complexity with the first draft. We're gonna drop some requests on the floor, but I think we can minimize that number.

Basic Setup

You've got your good idea, you've written some code and you have a private repo in GitHub. Great, now you need to get the thing out onto the internet. Let's start with some good tips before we get anywhere near to the internet itself.

- Semantic Versioning is your friend. If you get into the habit now of structuring commits and cutting releases, you are going to reap those benefits down the line. It seems silly right this second when the entirety of the application code fits inside of your head, but soon that won't be the case if you continue to work on it. I really like Release-Please as a tool to cut releases automatically based on commits and let you use the version number to be a meaningful piece of data for you to work off.

- Containers are mandatory. Just don't overthink this and commit early. Don't focus on container disk space usage. Disk space is not our largest concern. We want an easy to work with platform with a minimum amount of surface area for attacks. While Distroless isn't actually....without a Linux distro (I'm not entirely clear why that name was chosen), it is a great place to start. If you can get away with using these images, this is what you want to do.

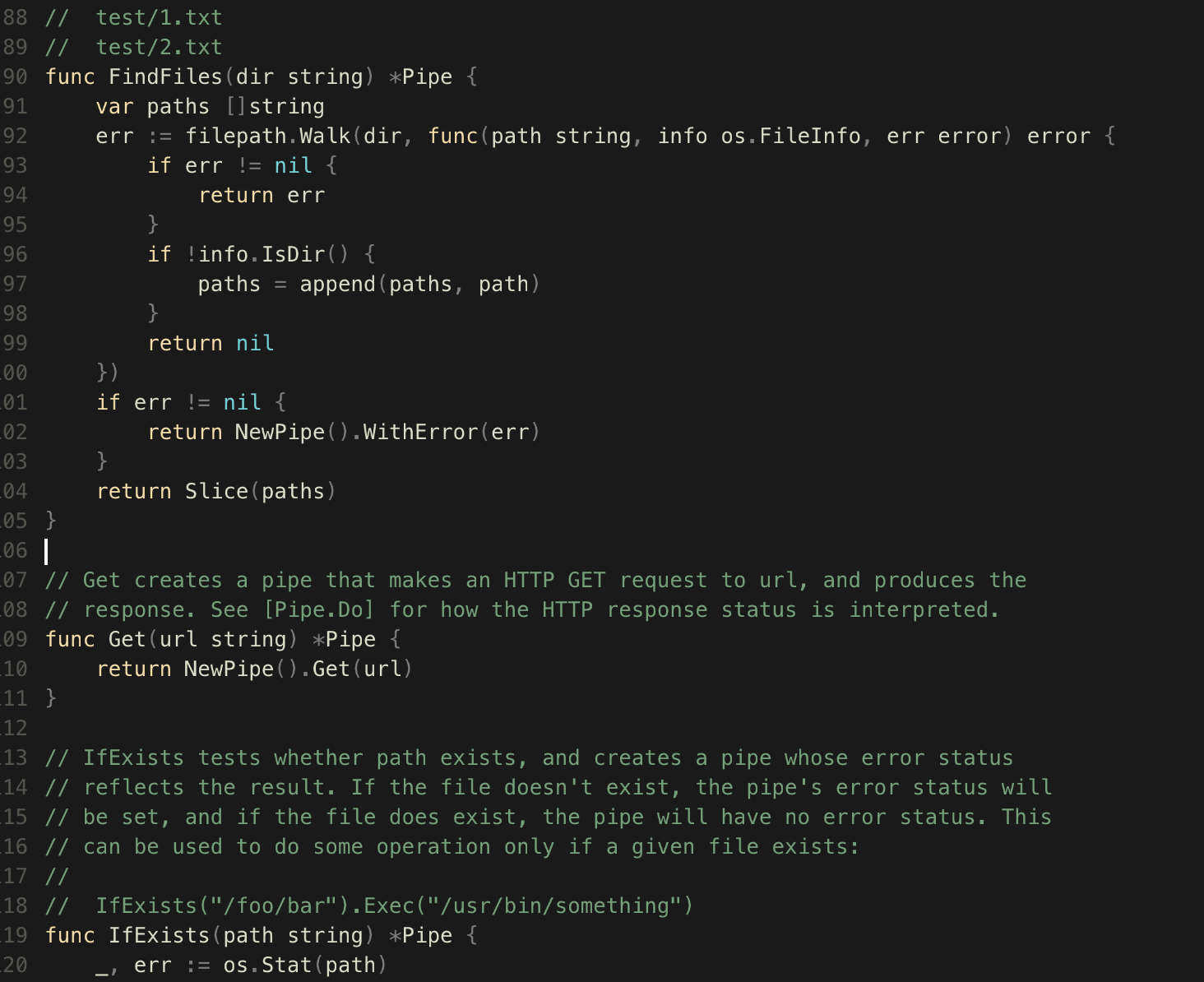

- Be careful about what dependencies you rely on in the early phase. At so many jobs I've had, a few unmaintained packages turned into mission-critical, impossible-to-remove, load-bearing weights around our necks. If you can do it with the standard library, great. When you find a dependency on the internet, look at what you need it to do and ask "can I just copy-paste the 40 lines of code I need from this?" before adding a new dependency forever. Dependency minimization isn't very cool right now, but I think it pays off big, especially when starting out.

- Healthcheck. You need some route on your app that you can hit which provides a good probability that the application is up and functional. /health or whatever, but this is gonna be pretty key to how the rest of this works.
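A minimal sketch of such a route, using only Python's standard library. The `/health` path and the `alive` body are chosen to match the monitor defined later in this post; `HealthHandler` and `make_server` are names made up for the example, and a real check would probably also confirm things like database connectivity before answering.

```python
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Answers GET /health with 200 and the body 'alive'; everything else is 404."""

    def do_GET(self):
        if self.path == "/health":
            status, body = 200, b"alive"
        else:
            status, body = 404, b"not found"
        self.send_response(status)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Keep the example quiet; a real app would log requests somewhere.
        pass


def make_server(host="127.0.0.1", port=0):
    """Build the server without starting it. Port 0 lets the OS pick a free port."""
    return ThreadingHTTPServer((host, port), HealthHandler)


# To run it by hand: make_server(port=8000).serve_forever()
```

The body check matters more than it looks: a reverse proxy can happily return a 200 error page while the app behind it is down, so matching on a known string is a slightly stronger signal than the status code alone.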

Deployment and Orchestration

Alright, so you've made the app and you have some way of tracking major/minor versions. Everything works great on your laptop. How do we put it on the internet?

- You want a way to take a container and deploy it out to a Linux host

- You don't want to patch or maintain the host

- You need to know if the deployment has gone wrong

- Either the deployment should roll back automatically or fail safe waiting for intervention

- The whole thing needs to be as safe as possible.

Is there a lightweight way to do this? Maybe!

Basic Design

Cloudflare -> Autoscaling Group -> 4 instances setup with Cloud init -> Docker Compose with Watchtower -> DBaaS

When we deploy, we'll be hitting the IP addresses of the instances on the Watchtower HTTP route with curl and telling it to connect to our private container registry and pull down new versions of our application. We shouldn't ever need to SSH into the boxes, and when a box dies or needs to be replaced, we can just delete it and run Terraform again to make a new one. SSL will be static long-lived certificates, and we should be able to distribute traffic across different cloud providers however we'd like.

Cloudflare as the Glue

I know, a lot of you are rolling your eyes. "This isn't portable at all!" Let me defend my work a bit. We need a WAF, we need SSL, we need DNS, we need a load balancer and we need metrics. I can do all of that with open-source projects, but it's not easy. As I was writing it out, it started to get (actually) quite difficult to do.

Cloudflare is very cheap for what they offer. We aren't using anything here that we couldn't move somewhere else if needed. It scales pretty well, up to 20 origins (which isn't amazing but if you have hit 20 servers serving customer traffic you are ready to move up in complexity). You are free to change the backend CPU as needed (or even experiment with local machines, mix and match datacenter and cloud, etc). You also get a nice dashboard of what is going on without any work. It's a hard value proposition to fight against, especially when almost all of it is free. I also have no ideological dog in the fight of OSS vs SaaS.

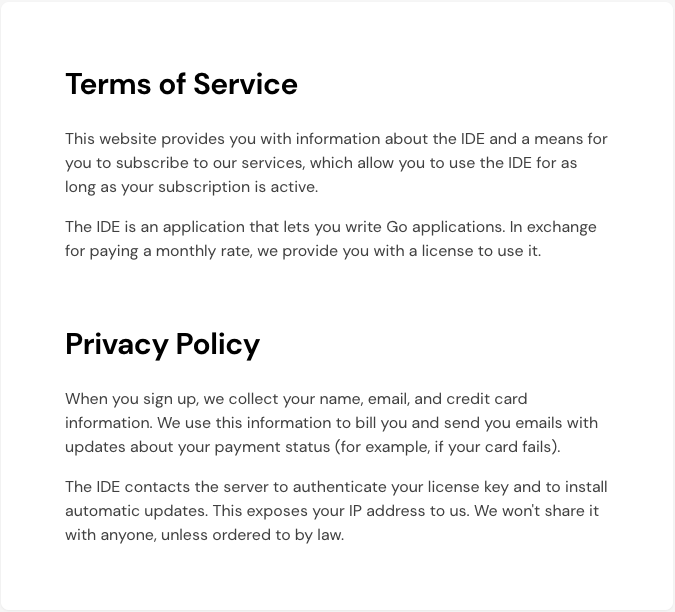

Pricing

Up to 2 origin servers: $5 per month

Additional origins, up to 20: $5 per month per origin

First 500k DNS requests are free

$0.50 per every 500k DNS requests after

Compared to ALB pricing, we can see why this is more idiot proof. There we have 4 dimensions to cost: New connections (per second), Active connections (per minute), Processed bytes (GBs per hour), Rule evaluations (per second). The hourly bill is calculated by taking the maximum LCUs consumed across the four dimensions and we're charged on the highest one. Now ALBs can be much cheaper than Cloudflare, but it's harder to control the cost. If one element starts to explode in price, there isn't a lot you can do to bring it back down.
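To make the "charged on the highest one" part concrete, here is a rough sketch. The LCU unit sizes below are the ones AWS publishes for ALBs with EC2 targets as far as I know, so treat them as assumptions and check the current pricing page; the sketch also ignores the free rule allowance. `billed_lcus` is a made-up helper name.

```python
# Assumed size of one LCU per dimension (verify against AWS's pricing page).
NEW_CONNECTIONS_PER_SECOND = 25
ACTIVE_CONNECTIONS_PER_MINUTE = 3000
PROCESSED_GB_PER_HOUR = 1.0
RULE_EVALUATIONS_PER_SECOND = 1000


def billed_lcus(new_conns_per_s, active_conns_per_min, gb_per_hour, rule_evals_per_s):
    """Return (dimension, lcus) for the dimension that drives the hourly bill."""
    dimensions = {
        "new_connections": new_conns_per_s / NEW_CONNECTIONS_PER_SECOND,
        "active_connections": active_conns_per_min / ACTIVE_CONNECTIONS_PER_MINUTE,
        "processed_bytes": gb_per_hour / PROCESSED_GB_PER_HOUR,
        "rule_evaluations": rule_evals_per_s / RULE_EVALUATIONS_PER_SECOND,
    }
    # Only the largest of the four values is billed for the hour.
    driver = max(dimensions, key=dimensions.get)
    return driver, dimensions[driver]


driver, lcus = billed_lcus(
    new_conns_per_s=50, active_conns_per_min=3000, gb_per_hour=0.5, rule_evals_per_s=100
)
print(driver, lcus)  # new_connections 2.0
```

The point of the exercise: a burst in any single dimension sets the bill for that hour, which is exactly what makes the cost hard to predict in advance.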

With Cloudflare we're looking at $20 a month and then traffic. So if we get 60,000,000 requests a month, we're paying about $60 a month in DNS and $20 for the load balancer. For ALB it would largely depend on the type of traffic we're getting and how it is distributed.
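The DNS figure is simple enough to check. A quick sketch, assuming partial blocks of 500k are billed as whole blocks (the price list doesn't say) and treating every request as a billable DNS query, as the estimate above does; `dns_cost` is just a name for the example.

```python
import math


def dns_cost(requests, free=500_000, block=500_000, price_per_block=0.50):
    """Monthly DNS cost in dollars: first `free` requests cost nothing,
    every started block of `block` requests after that costs `price_per_block`."""
    billable = max(0, requests - free)
    return math.ceil(billable / block) * price_per_block


print(dns_cost(60_000_000))  # 59.5
```

Unlike the ALB bill, there is only one knob here: request count. If traffic doubles, so does this line item, and nothing else moves.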

BUT there are also much cheaper options. For €7 a month on Hetzner, you can get 25 targets and 20 TB of network traffic, with traffic above that costing €1/TB. So for the same cost we could handle a pretty incredible amount of traffic through Hetzner, but it commits us to them and violates the spirit of this thing. I just wanted to mention it in case someone was getting ready to "actually" me.

Also keep in mind we're just in the "trying ideas out" part of the exercise. Let's define a load balancer.

    provider "cloudflare" {
      email   = "you@example.com" # placeholder address
      api_key = "your_api_key"
    }

    resource "cloudflare_load_balancer" "example_lb" {
      name             = "example-load-balancer.example.com"
      zone_id          = "0da42c8d2132a9ddaf714f9e7c920711"
      default_pool_ids = [cloudflare_load_balancer_pool.pool1.id, cloudflare_load_balancer_pool.pool2.id]
      fallback_pool_id = cloudflare_load_balancer_pool.pool1.id
      steering_policy  = "random"
      session_affinity = "none"
      proxied          = true
      # Add other load balancer settings here from https://registry.terraform.io/providers/cloudflare/cloudflare/latest/docs/resources/load_balancer
    }

Then we need a monitor.

    resource "cloudflare_load_balancer_monitor" "example" {
      account_id     = "f037e56e89293a057740de681ac9abbe"
      type           = "http"
      expected_body  = "alive"
      expected_codes = "2xx"
      method         = "GET"
      timeout        = 7
      path           = "/health"
      interval       = 60
      retries        = 2
      description    = "example http load balancer"

      header {
        header = "Host"
        values = ["example.com"]
      }

      allow_insecure   = false
      follow_redirects = true
      probe_zone       = "example.com"
    }

Finally we need some pools.

resource "cloudflare_load_balancer_pool" "pool1" {

account_id = "f037e56e89293a057740de681ac9abbe"

name = "pool1"

monitor = cloudflare_load_balancer_monitor.example.id

origins {

name = "server01"

address = "d9bb:3880:71b0:5fab:e426:8883:5a75:e82e"

enabled = false

header {

header = "Host"

values = ["server01"]

}

}

origins {

name = "server02"

address = "9726:61db:23a9:41d5:7eb0:649a:87b0:4291"

header {

header = "Host"

values = ["server02"]

}

}

description = "example load balancer pool 1"

enabled = false

minimum_origins = 1

notification_email = "[email protected]"

load_shedding {

default_percent = 55

default_policy = "random"

}

origin_steering {

policy = "random"

}

}

resource "cloudflare_load_balancer_pool" "pool2" {

account_id = "f037e56e89293a057740de681ac9abbe"

name = "pool2"

monitor = cloudflare_load_balancer_monitor.example.id

origins {

name = "server03"

address = "3601:03b9:88b7:fa50:8163:818c:eceb:bc14"

enabled = false

header {

header = "Host"

values = ["server03"]

}

}

origins {

name = "server04"

address = "8118:87ef:6b50:099d:fc4a:e66d:a991:5d20"

header {

header = "Host"

values = ["server04"]

}

}

description = "example load balancer pool 2"

enabled = false

minimum_origins = 1

notification_email = "[email protected]"

load_shedding {

default_percent = 55

default_policy = "random"

}

origin_steering {

policy = "random"

}

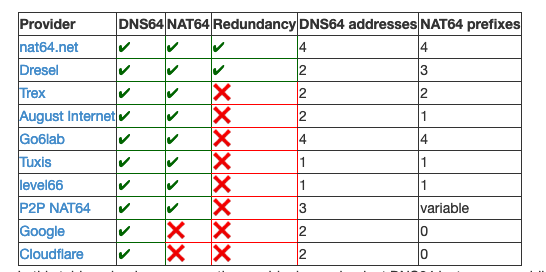

}The addresses are just placeholders, but you'll need to swap values etc. This gives us a nice basic load balancer. Note that we don't have session affinity turned on, so we'll need to add Redis or something to help with state server-side. The IP addresses we point to will need to be reserved on the cloud provider side, but we can use IPv6 so hopefully should save us a few dollars a month there.

How much uptime is enough uptime

So there are two paths here we have to discuss before we get much further.

Path 1

When we deploy to a server, we make an API call to Cloudflare to mark the origin as not enabled. Then we wait for the connections to drain, deploy the container, bring it back up, wait for it to be healthy and then we mark it enabled again. This is traditionally the way we would need to do things, if we were targeting zero downtime.

Now we can do this. We have places later that we could stick such a script. But this is gonna be brittle. We'd basically need to do something like the following.

- Run a GET against https://api.cloudflare.com/client/v4/user/load_balancers/pools

- Take the result, look at the IP addresses, figure out which one is the machine in question and then mark it as not enabled IF all other origins were healthy. We wouldn't want to remove multiple machines at the same time. So we'd then need to hit: https://api.cloudflare.com/client/v4/user/load_balancers/pools/{identifier}/health and confirm the health of the pools.

- But "health" isn't an instant concept. There is a delay between when an origin becomes unhealthy and when I'll know about it, depending on how often I check and how many retries are configured. So this isn't a perfect system, but it should work pretty well as long as I add a bit of jitter to it.
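The decision in the middle of those steps ("only pull this machine if everything else is fine") can be sketched as a pure function. The `Origin` shape below is a simplified stand-in I made up for the example, not the actual Cloudflare API response, and `can_disable` is a hypothetical helper; the API calls that would fill it in are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Origin:
    address: str
    enabled: bool
    healthy: bool


def can_disable(origins, target_address):
    """True only if the target is currently enabled and every other origin
    is both enabled and healthy, so we never drain two machines at once."""
    target = next((o for o in origins if o.address == target_address), None)
    if target is None or not target.enabled:
        return False
    others = [o for o in origins if o.address != target_address]
    # Refuse to drain the only origin in the pool.
    return bool(others) and all(o.enabled and o.healthy for o in others)


pool = [
    Origin("2001:db8::1", enabled=True, healthy=True),
    Origin("2001:db8::2", enabled=True, healthy=True),
]
print(can_disable(pool, "2001:db8::1"))  # True
```

Even this small function shows where the brittleness comes from: the `healthy` flag is only as fresh as the last health check, so the answer can be stale by the time we act on it.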

I think this exceeds what I want to do for the first pass. We can do it, but it's not consistent with the uptime discussion we had before. This is brittle and is going to require a lot of babysitting to get right.

Path 2

We rely on the healthchecks to steer traffic and assume that our deployments are going to be pretty fast, so while we might drop some traffic on the floor, a user (with our random distribution and server-side sessions) should be able to reload the page and hopefully get past the problem. It might not scale forever but it does remove a lot of our complexity.

Let's go with Path 2 for now.

Server setup + WAF

Alright so we've got the load balancer, it sits on the internet and takes traffic. Fabulous stuff. How do we set up a server? To do it cross-platform we have to use cloud-init.

The basics are pretty straightforward. We're gonna use the latest Debian, update it and restart. Then we're gonna install Docker Compose and finally stick a few files in there to run this. This is all pretty easy, but we do have a problem we need to tackle first. We need some level of secrets management so we can write out Terraform and cloud-init files and keep them in version control without the secrets just kinda living there.

SOPS

So typically for secret management we want to use whatever our cloud provider gives us, but since we don't have something like that, we'll need to do something more basic.

We'll use age for encryption, which is a great, simple encryption tool. You can install it here. We run age-keygen -o key.txt, which gives us our secret key file. Then we need to set an environment variable with the path to the key like this: SOPS_AGE_KEY_FILE=/Users/mathew.duggan/key.txt

For those unfamiliar with how SOPS (installed here) works, basically you generate the age key as shown above and then you can encrypt files through a CLI or with Terraform locally. So:

secrets.json

    {
      "username": "admin",
      "password": "password"
    }

Turns into:

    {
      "username": "ENC[AES256_GCM,data:+bGf/sI=,iv:J47szLfZ5wMWr6Ghc94VAABXs2Ec4Hi+e3ohc2HuF/Q=,tag:XIY1jOgDe9SBDMGxFhLwtw==,type:str]",
      "password": "ENC[AES256_GCM,data:RIHz14crqEk=,iv:H3g7/4Bd5vB/6U+Kf+rIR/xBRIGHGoZeN7U1zi5lgsM=,tag:+vD9BXb18rLhpf/sTsvYEA==,type:str]",
      "sops": {
        "kms": null,
        "gcp_kms": null,
        "azure_kv": null,
        "hc_vault": null,
        "age": [
          {
            "recipient": "age1j6dmaunhspfvh78lgnrtr6zkd7whcypcz6jdwypaydc6gaa79vtq5ryvzf",
            "enc": "-----BEGIN AGE ENCRYPTED FILE-----\nYWdlLWVuY3J5cHRpb24ub3JnL3YxCi0+IFgyNTUxOSA1YlcvdkpGc3pBbVFiUnhP\nYVJnalp0WlREVjlQZkFROGtvcWN2VWxsUUJnCmYvZ1ZPd3NzTjZxNHd6MEVNcmI1\nTTBZdnFaSEFSaXZRK28rc01VZGRxWHMKLS0tIGpZUjZCNDFDUnIvYXRJTDhtcGlu\nT3JJWlN1YlJYeU1ueEQ1cytDbDFXQ00K70mBEowf/AGgiFFNj3ocv0NfbI1IMJX/\nMJHMKtXPYJsoSKJla6Y+cXMXPe7LNNorSnmqvkNF7rgEMvONMNoEiA==\n-----END AGE ENCRYPTED FILE-----\n"
          }
        ],
        "lastmodified": "2023-10-19T13:06:42Z",
        "mac": "ENC[AES256_GCM,data:q8R8Zb+PtpBs6TBPu6VJsQXEKLwi2+WtpE3culIy1obUNdfjWaXyBtC/zbWI5eeh2Z4u//2p49G2bMv0jSzMJZnH4TLIzpHxnd6XFjzu4TqObM6FnI3ZW/SSoPwTRxgHqvooMffm3NO5pxoz3FhnJDHwYk+jTK+JoGxyZF5nBe4=,iv:Ey+so87o/kYbvOaSUXs+vyIrEQXEC39vmswdl0L3Gvw=,tag:5mWJTfBgCFjXVuoYBUiDCA==,type:str]",
        "pgp": null,
        "unencrypted_suffix": "_unencrypted",
        "version": "3.8.1"
      }
    }

By running this:

    sops --encrypt --age age1j6dmaunhspfvh78lgnrtr6zkd7whcypcz6jdwypaydc6gaa79vtq5ryvzf secrets.json > secrets.enc.json

So we can use this with Terraform pretty easily. We run export SOPS_AGE_KEY_FILE=/Users/mathew.duggan/key.txt just to ensure everything is set and then the Terraform looks like the following:

    terraform {
      required_providers {
        sops = {
          source  = "carlpett/sops"
          version = "~> 0.5"
        }
      }
    }

    data "sops_file" "secret" {
      source_file = "secrets.enc.json"
    }

    output "root-value-password" {
      # Access the password variable from the map
      value     = data.sops_file.secret.data["password"]
      sensitive = true
    }

Now, you can also use SOPS with the key management services from AWS, GCP or Azure, or just use those providers' own secrets systems. I present this only as a "we're small and are looking for a way to easily encrypt configuration files" option.
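Since the whole point is that only the encrypted file ends up in version control, a small guard that refuses plaintext can be handy in a pre-commit hook. This is a sketch under assumptions: it relies on the `ENC[` prefix, the top-level `sops` metadata block and the `_unencrypted` suffix visible in the encrypted example above, and `plaintext_leaves` and `looks_encrypted` are names invented here, not part of SOPS.

```python
def plaintext_leaves(node, path="", suffix="_unencrypted"):
    """Yield the paths of values that are not SOPS ciphertext."""
    if isinstance(node, dict):
        for key, value in node.items():
            if path == "" and key == "sops":
                continue  # top-level metadata block, not a secret
            if key.endswith(suffix):
                continue  # keys deliberately left in plaintext
            child = f"{path}.{key}" if path else key
            yield from plaintext_leaves(value, child, suffix)
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from plaintext_leaves(value, f"{path}[{index}]", suffix)
    elif isinstance(node, (str, int, float, bool)):
        if not (isinstance(node, str) and node.startswith("ENC[")):
            yield path


def looks_encrypted(document):
    """True if the parsed JSON has SOPS metadata and no plaintext values."""
    return "sops" in document and not list(plaintext_leaves(document))


plain = {"username": "admin", "password": "password"}
print(sorted(plaintext_leaves(plain)))  # ['password', 'username']
```

To use it, load the candidate file with `json.load` and fail the commit if `looks_encrypted` returns False. It is a sanity check on the file's shape, not a verification of the ciphertext itself.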

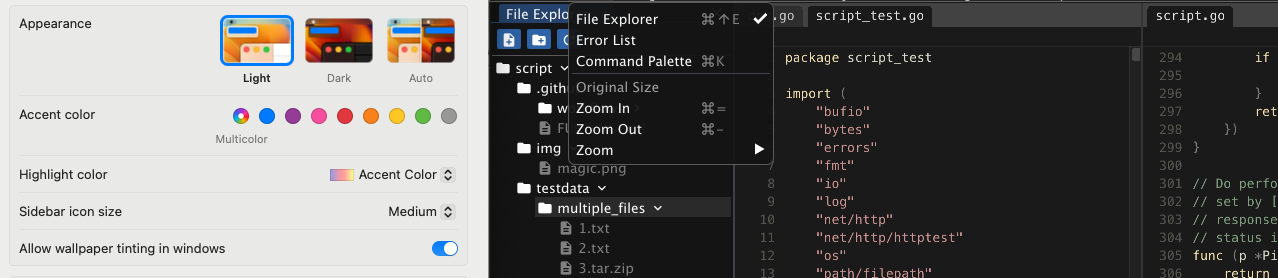

Cloud init

So now we're to the last part of the server setup. We'll need to define a cloud-init YAML to set up the host and we'll need to define a Docker Compose file to set up the application that is going to handle all the pulling of images from here. Now thankfully we should be able to reuse this stuff for the foreseeable future.

    #cloud-config
    package_update: true
    package_upgrade: true
    package_reboot_if_required: true

    groups:
      - docker

    users:
      - name: admin
        lock_passwd: true
        shell: /bin/bash
        ssh_authorized_keys:
          - ${init_ssh_public_key}
        groups: docker
        sudo: ALL=(ALL) NOPASSWD:ALL

    packages:
      - apt-transport-https
      - ca-certificates
      - curl
      - gnupg-agent
      - software-properties-common
      - unattended-upgrades
      - nginx

    write_files:
      - owner: root:root
        encoding: b64
        path: /etc/ssl/cloudflare.crt
        content: |

LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tDQpNSUlHQ2pDQ0EvS2dBd0lCQWdJSVY1RzZsVmJDTG1Fd0RRWUpLb1pJaHZjTkFRRU5CUUF3Z1pBeEN6QUpCZ05WDQpCQVlUQWxWVE1Sa3dGd1lEVlFRS0V4QkRiRzkxWkVac1lYSmxMQ0JKYm1NdU1SUXdFZ1lEVlFRTEV3dFBjbWxuDQphVzRnVUhWc2JERVdNQlFHQTFVRUJ4TU5VMkZ1SUVaeVlXNWphWE5qYnpFVE1CRUdBMVVFQ0JNS1EyRnNhV1p2DQpjbTVwWVRFak1DRUdBMVVFQXhNYWIzSnBaMmx1TFhCMWJHd3VZMnh2ZFdSbWJHRnlaUzV1WlhRd0hoY05NVGt4DQpNREV3TVRnME5UQXdXaGNOTWpreE1UQXhNVGN3TURBd1dqQ0JrREVMTUFrR0ExVUVCaE1DVlZNeEdUQVhCZ05WDQpCQW9URUVOc2IzVmtSbXhoY21Vc0lFbHVZeTR4RkRBU0JnTlZCQXNUQzA5eWFXZHBiaUJRZFd4c01SWXdGQVlEDQpWUVFIRXcxVFlXNGdSbkpoYm1OcGMyTnZNUk13RVFZRFZRUUlFd3BEWVd4cFptOXlibWxoTVNNd0lRWURWUVFEDQpFeHB2Y21sbmFXNHRjSFZzYkM1amJHOTFaR1pzWVhKbExtNWxkRENDQWlJd0RRWUpLb1pJaHZjTkFRRUJCUUFEDQpnZ0lQQURDQ0Fnb0NnZ0lCQU4yeTJ6b2pZZmwwYktmaHAwQUpCRmVWK2pRcWJDdzNzSG12RVB3TG1xRExxeW5JDQo0MnRaWFI1eTkxNFpCOVpyd2JML0s1TzQ2ZXhkL0x1akpuVjJiM2R6Y3g1cnRpUXpzbzB4emxqcWJuYlFUMjBlDQppaHgvV3JGNE9rWkt5ZFp6c2RhSnNXQVB1cGxESDVQN0o4MnEzcmU4OGpRZGdFNWhxanFGWjNjbENHN2x4b0J3DQpoTGFhem0zTkpKbFVmemRrOTdvdVJ2bkZHQXVYZDVjUVZ4OGpZT09lVTYwc1dxbU1lNFFIZE92cHFCOTFiSm9ZDQpRU0tWRmpVZ0hlVHBOOHROcEtKZmI5TEluM3B1bjNiQzlOS05IdFJLTU5YM0tsL3NBUHE3cS9BbG5kdkEyS3czDQpEa3VtMm1IUVVHZHpWSHFjT2dlYTlCR2pMSzJoN1N1WDkzelRXTDAydTc5OWRyNlhrcmFkL1dTaEhjaGZqalJuDQphTDM1bmlKVURyMDJZSnRQZ3hXT2JzcmZPVTYzQjhqdUxVcGhXLzRCT2pqSnlBRzVsOWoxLy9hVUdFaS9zRWU1DQpscVZ2MFA3OFFyeG94UitNTVhpSndRYWI1RkI4VEcvYWM2bVJIZ0Y5Q21rWDkwdWFSaCtPQzA3WGpUZGZTS0dSDQpQcE05aEIyWmhMb2wvbmY4cW1vTGRvRDVIdk9EWnVLdTIrbXVLZVZIWGd3Mi9BNndNN093cmlueFppeUJrNUhoDQpDdmFBREg3UFpwVTZ6L3p2NU5VNUhTdlhpS3RDekZ1RHU0L1pmaTM0UmZIWGVDVWZIQWI0S2ZOUlhKd01zeFVhDQorNFpwU0FYMkc2Um5HVTVtZXVYcFU1L1YrRFFKcC9lNjlYeXlZNlJYRG9NeXdhRUZsSWxYQnFqUlJBMnBBZ01CDQpBQUdqWmpCa01BNEdBMVVkRHdFQi93UUVBd0lCQmpBU0JnTlZIUk1CQWY4RUNEQUdBUUgvQWdFQ01CMEdBMVVkDQpEZ1FXQkJSRFdVc3JhWXVBNFJFemFsZk5Wemphbm4zRjZ6QWZCZ05WSFNNRUdEQVdnQlJEV1VzcmFZdUE0UkV6DQphbGZOVnpqYW5uM0Y2ekFOQmdrcWhraUc5dzBCQVEwRkFBT0NBZ0VBa1ErVDlucWNTbEF1Vy85MERlWW1RT1cxDQpRaHFPb3I1cHNCRUd2eGJOR1Yy
aGRMSlk4aDZRVXE0OEJDZXZjTUNoZy9MMUNrem5CTkk0MGkzLzZoZURuM0lTDQp6VkV3WEtmMzRwUEZDQUNXVk1aeGJRamtOUlRpSDhpUnVyOUVzYU5RNW9YQ1BKa2h3ZzIrSUZ5b1BBQVlVUm9YDQpWY0k5U0NEVWE0NWNsbVlISi9YWXdWMWljR1ZJOC85YjJKVXFrbG5PVGE1dHVnd0lVaTVzVGZpcE5jSlhIaGd6DQo2QktZRGwwL1VQMGxMS2JzVUVUWGVUR0RpRHB4WllJZ2JjRnJSRERrSEM2QlN2ZFdWRWlINWI5bUgyQk9ONjB6DQowTzBqOEVFS1R3aTlqbmFmVnRaUVhQL0Q4eW9Wb3dkRkRqWGNLa09QRi8xZ0loOXFyRlI2R2RvUFZnQjNTa0xjDQo1dWxCcVphQ0htNTYzanN2V2Iva1hKbmxGeFcrMWJzTzlCREQ2RHdlQmNHZE51cmdtSDYyNXdCWGtzU2REN3kvDQpmYWtrOERhZ2piaktTaFlsUEVGT0FxRWNsaXdqRjQ1ZWFiTDB0MjdNSlY2MU8vakh6SEwzZGtuWGVFNEJEYTJqDQpiQStKYnlKZVVNdFU3S01zeHZ4ODJSbWhxQkVKSkRCQ0ozc2NWcHR2aERNUnJ0cURCVzVKU2h4b0FPY3BGUUdtDQppWVdpY240Nm5QRGpnVFUwYlgxWlBwVHByeVhidmNpVkw1UmtWQnV5WDJudGNPTERQbFpXZ3haQ0JwOTZ4MDdGDQpBbk96S2daazRSelpQTkF4Q1hFUlZ4YWpuL0ZMY09oZ2xWQUtvNUgwYWMrQWl0bFEwaXA1NUQyL21mOG83MnRNDQpmVlE2VnB5akVYZGlJWFdVcS9vPQ0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQ==

      - owner: root:root
        encoding: b64
        path: /etc/ssl/cert.pem
        content: |

LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tDQpNSUlFcGpDQ0E0NmdBd0lCQWdJVUgzZXMwaHVaQy8rTUNxQWRyWXEwTE05UFY4QXdEUVlKS29aSWh2Y05BUUVMDQpCUUF3Z1lzeEN6QUpCZ05WQkFZVEFsVlRNUmt3RndZRFZRUUtFeEJEYkc5MVpFWnNZWEpsTENCSmJtTXVNVFF3DQpNZ1lEVlFRTEV5dERiRzkxWkVac1lYSmxJRTl5YVdkcGJpQlRVMHdnUTJWeWRHbG1hV05oZEdVZ1FYVjBhRzl5DQphWFI1TVJZd0ZBWURWUVFIRXcxVFlXNGdSbkpoYm1OcGMyTnZNUk13RVFZRFZRUUlFd3BEWVd4cFptOXlibWxoDQpNQjRYRFRJek1EY3pNVEUzTXprd01Gb1hEVE00TURjeU56RTNNemt3TUZvd1lqRVpNQmNHQTFVRUNoTVFRMnh2DQpkV1JHYkdGeVpTd2dTVzVqTGpFZE1Cc0dBMVVFQ3hNVVEyeHZkV1JHYkdGeVpTQlBjbWxuYVc0Z1EwRXhKakFrDQpCZ05WQkFNVEhVTnNiM1ZrUm14aGNtVWdUM0pwWjJsdUlFTmxjblJwWm1sallYUmxNSUlCSWpBTkJna3Foa2lHDQo5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdmtmbjB1eVZ3LzlSYlBDbDQ2dzhIeVZnTXZKREtVUWgvQUk0DQpIODRXRGRzM1hTRmxrbmFIK0FQdmJoM0Rsc3M5NEZnRDVGVVRMdENzQzRtSFpZVlNiRzJqeCtJbjJGcTdTSjdUDQp1QlJUbHBXWmNyVEViRjRBa00wRm53NGwwbEdQeFlZRjRaOG5uZm13YUtvNnlwb0Ftd3draXJWWXU3dWE4Mm01DQp3eWoyZHZKcWNkUExxTXdHRFVkYnlYemdwZE9IaXRBVFFoTE56VmtaOEI1L2RyODcweDR3TE8rRkVOOG92QUprDQpaNVZCRndSOEI5WEs4dUtEcmdBZkxYUVM5UVZ3WHpjcmQxQVp6S1RDVnBlMmlwemFiSGN5TUt1WDdpZjRTRGQ1DQpiZ2Ird1hycGY2dkNRWklDa3REdWJFcDdCVzlCNVhIUnlmMnJ2Yms2VEtjZ2xTbGNRUUlEQVFBQm80SUJLRENDDQpBU1F3RGdZRFZSMFBBUUgvQkFRREFnV2dNQjBHQTFVZEpRUVdNQlFHQ0NzR0FRVUZCd01DQmdnckJnRUZCUWNEDQpBVEFNQmdOVkhSTUJBZjhFQWpBQU1CMEdBMVVkRGdRV0JCU3pwcWpFOEJUK0FKYUg2c3VnRmwxajdqend4REFmDQpCZ05WSFNNRUdEQVdnQlFrNkZOWFhYdzBRSWVwNjVUYnV1RVdlUHdwcERCQUJnZ3JCZ0VGQlFjQkFRUTBNREl3DQpNQVlJS3dZQkJRVUhNQUdHSkdoMGRIQTZMeTl2WTNOd0xtTnNiM1ZrWm14aGNtVXVZMjl0TDI5eWFXZHBibDlqDQpZVEFwQmdOVkhSRUVJakFnZ2c4cUxtMWhkR1IxWjJkaGJpNWpiMjJDRFcxaGRHUjFaMmRoYmk1amIyMHdPQVlEDQpWUjBmQkRFd0x6QXRvQ3VnS1lZbmFIUjBjRG92TDJOeWJDNWpiRzkxWkdac1lYSmxMbU52YlM5dmNtbG5hVzVmDQpZMkV1WTNKc01BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQ3VvUG9KV05VZ0xPRXVmendLRlprMHBvL2tNR29qDQoxYTdCSGEzcWtNWGUrN2J4aW1pQTBvYzcyVEhYSm8zVm82bTIwaGRpbDRiSzVPYzZoTGpiUTFOR2ZXNm84MXk2DQpyUXZEaXBXN3JuL3R3V3hPTkpHTFNDZDZFalpqWXpUUW5EdFBSQWQrVnBwV1BuNUtLZHRSNkM2ZjhaMFlqeldjDQp3b3JLdkRuV2E5b0gycEUzZUNS
RUZsc1lRUUtVNWxOYUpibm9nRXNaY2ZDa0MvU0JCaTRaN0lIRnJzWnd1YTU5DQorVDIxUWNOd3BKbExLZ2VRZlpLazMzTFc5MFlyYjRhNStMaTljQzZsVC9MRHdTc20ySkVVVm1nbDJOaC8wV2dpDQpBcHFxUjV5dmUwdUI2M0tTdW90Z2hyWlp0cnNhVW1OYytjRjhneHU4Si8rdXFhaWZQWk83NVZtVw0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQ==

      - owner: root:root
        encoding: b64
        path: /etc/ssl/key.pem
        permissions: '0600'
        content: ${private_ssl_key}
      - owner: admin:docker
        path: /home/admin/docker-compose.yaml
        # Deferred so the file is written after the admin user exists
        defer: true
        content: |
          version: "3"
          services:
            app:
              image: ghcr.io/<org>/<image>:<tag>
              restart: unless-stopped
              ports:
                - "8000:2368"
              labels:
                - "com.centurylinklabs.watchtower.enable=true"
            watchtower:
              image: containrrr/watchtower
              command: --debug --http-api-update
              restart: unless-stopped
              environment:
                - WATCHTOWER_HTTP_API_TOKEN=${watchtower_token}
              labels:
                - "com.centurylinklabs.watchtower.enable=false"
              ports:
                - "8080:8080"
              volumes:
                - /var/run/docker.sock:/var/run/docker.sock
                - /home/admin/.docker/config.json:/config.json
      - owner: www-data:www-data
        path: /etc/nginx/sites-available/default
        # Deferred so the file is written after the nginx package is installed
        defer: true
        content: |
          server {
            listen 443 ssl http2;
            listen [::]:443 ssl http2;
            charset UTF-8;
            ssl_session_timeout 5m;
            ssl_prefer_server_ciphers on;
            ssl_ciphers ECDH+AESGCM:ECDH+AES256:ECDH+AES128:DH+3DES:!ADH:!AECDH:!MD5;
            ssl_protocols TLSv1.2;
            ssl_buffer_size 4k;
            ssl_certificate /etc/ssl/cert.pem;
            ssl_certificate_key /etc/ssl/key.pem;
            ssl_client_certificate /etc/ssl/cloudflare.crt;
            ssl_verify_client on;
            server_name hostname.com www.hostname.com;

            location / {
              proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
              proxy_set_header X-Forwarded-Proto $scheme;
              proxy_set_header X-Real-IP $remote_addr;
              proxy_set_header Host $host;
              proxy_http_version 1.1;
              proxy_buffering on;
              proxy_pass http://127.0.0.1:8000;
              proxy_redirect off;
            }

            location /v1/update {
              proxy_http_version 1.1;
              proxy_buffering on;
              proxy_pass http://127.0.0.1:8080;
              proxy_redirect off;
            }
          }

    runcmd:
      - curl -fsSL https://download.docker.com/linux/debian/gpg | apt-key add -
      - add-apt-repository "deb [arch=$(dpkg --print-architecture)] https://download.docker.com/linux/debian $(lsb_release -cs) stable"
      - apt-get update -y
      - apt-get install -y docker-ce docker-ce-cli containerd.io
      - systemctl start docker
      - systemctl enable docker
      # Compose v2 release tags carry a "v" prefix and the assets use a lowercase "linux"
      - curl -L "https://github.com/docker/compose/releases/download/v2.23.0/docker-compose-linux-$(uname -m)" -o /usr/local/bin/docker-compose
      - chmod +x /usr/local/bin/docker-compose
      - su admin -c 'docker login -u ${docker_username} -p ${docker_password} ${docker_repository}'
      # Use the binary installed above and the same file name written by write_files
      - su admin -c '/usr/local/bin/docker-compose -f /home/admin/docker-compose.yaml up -d'

Now obviously you'll need to modify this and test it. It took some tweaks to get it working on mine, and I'm confident there are improvements we could make. However, I think we can use it as a sample reference doc with the understanding that it is NOT ready to copy and paste.

So here's the basic flow. We're going to use the SSL certificates Cloudflare gives us and also install their certificate for Authenticated Origin Pulls. This ensures all the traffic coming to our server is from Cloudflare. Now, it could be traffic from another Cloudflare customer, a malicious one, but at least this gives us a good starting point to limit the traffic. Plus, presumably if there is a malicious customer hitting you, at least you can reach out to Cloudflare and they'll do....something.

Now we put it together with Terraform and we have something we can deploy. We'll use DigitalOcean as our example, but the cloud provider part doesn't really matter.

secrets.json

{

"private_ssl_key": "LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tDQpNSUlFcGpDQ0E0NmdBd0lCQWdJVUgzZXMwaHVaQy8rTUNxQWRyWXEwTE05UFY4QXdEUVlKS29aSWh2Y05BUUVMDQpCUUF3Z1lzeEN6QUpCZ05WQkFZVEFsVlRNUmt3RndZRFZRUUtFeEJEYkc5MVpFWnNZWEpsTENCSmJtTXVNVFF3DQpNZ1lEVlFRTEV5dERiRzkxWkVac1lYSmxJRTl5YVdkcGJpQlRVMHdnUTJWeWRHbG1hV05oZEdVZ1FYVjBhRzl5DQphWFI1TVJZd0ZBWURWUVFIRXcxVFlXNGdSbkpoYm1OcGMyTnZNUk13RVFZRFZRUUlFd3BEWVd4cFptOXlibWxoDQpNQjRYRFRJek1EY3pNVEUzTXprd01Gb1hEVE00TURjeU56RTNNemt3TUZvd1lqRVpNQmNHQTFVRUNoTVFRMnh2DQpkV1JHYkdGeVpTd2dTVzVqTGpFZE1Cc0dBMVVFQ3hNVVEyeHZkV1JHYkdGeVpTQlBjbWxuYVc0Z1EwRXhKakFrDQpCZ05WQkFNVEhVTnNiM1ZrUm14aGNtVWdUM0pwWjJsdUlFTmxjblJwWm1sallYUmxNSUlCSWpBTkJna3Foa2lHDQo5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdmtmbjB1eVZ3LzlSYlBDbDQ2dzhIeVZnTXZKREtVUWgvQUk0DQpIODRXRGRzM1hTRmxrbmFIK0FQdmJoM0Rsc3M5NEZnRDVGVVRMdENzQzRtSFpZVlNiRzJqeCtJbjJGcTdTSjdUDQp1QlJUbHBXWmNyVEViRjRBa00wRm53NGwwbEdQeFlZRjRaOG5uZm13YUtvNnlwb0Ftd3draXJWWXU3dWE4Mm01DQp3eWoyZHZKcWNkUExxTXdHRFVkYnlYemdwZE9IaXRBVFFoTE56VmtaOEI1L2RyODcweDR3TE8rRkVOOG92QUprDQpaNVZCRndSOEI5WEs4dUtEcmdBZkxYUVM5UVZ3WHpjcmQxQVp6S1RDVnBlMmlwemFiSGN5TUt1WDdpZjRTRGQ1DQpiZ2Ird1hycGY2dkNRWklDa3REdWJFcDdCVzlCNVhIUnlmMnJ2Yms2VEtjZ2xTbGNRUUlEQVFBQm80SUJLRENDDQpBU1F3RGdZRFZSMFBBUUgvQkFRREFnV2dNQjBHQTFVZEpRUVdNQlFHQ0NzR0FRVUZCd01DQmdnckJnRUZCUWNEDQpBVEFNQmdOVkhSTUJBZjhFQWpBQU1CMEdBMVVkRGdRV0JCU3pwcWpFOEJUK0FKYUg2c3VnRmwxajdqend4REFmDQpCZ05WSFNNRUdEQVdnQlFrNkZOWFhYdzBRSWVwNjVUYnV1RVdlUHdwcERCQUJnZ3JCZ0VGQlFjQkFRUTBNREl3DQpNQVlJS3dZQkJRVUhNQUdHSkdoMGRIQTZMeTl2WTNOd0xtTnNiM1ZrWm14aGNtVXVZMjl0TDI5eWFXZHBibDlqDQpZVEFwQmdOVkhSRUVJakFnZ2c4cUxtMWhkR1IxWjJkaGJpNWpiMjJDRFcxaGRHUjFaMmRoYmk1amIyMHdPQVlEDQpWUjBmQkRFd0x6QXRvQ3VnS1lZbmFIUjBjRG92TDJOeWJDNWpiRzkxWkdac1lYSmxMbU52YlM5dmNtbG5hVzVmDQpZMkV1WTNKc01BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQ3VvUG9KV05VZ0xPRXVmendLRlprMHBvL2tNR29qDQoxYTdCSGEzcWtNWGUrN2J4aW1pQTBvYzcyVEhYSm8zVm82bTIwaGRpbDRiSzVPYzZoTGpiUTFOR2ZXNm84MXk2DQpyUXZEaXBXN3JuL3R3V3hPTkpHTFNDZDZFalpqWXpUUW5EdFBSQWQrVnBwV1BuNUtLZHRSNkM2ZjhaMFlqeldjDQp3b3JL
dkRuV2E5b0gycEUzZUNSRUZsc1lRUUtVNWxOYUpibm9nRXNaY2ZDa0MvU0JCaTRaN0lIRnJzWnd1YTU5DQorVDIxUWNOd3BKbExLZ2VRZlpLazMzTFc5MFlyYjRhNStMaTljQzZsVC9MRHdTc20ySkVVVm1nbDJOaC8wV2dpDQpBcHFxUjV5dmUwdUI2M0tTdW90Z2hyWlp0cnNhVW1OYytjRjhneHU4Si8rdXFhaWZQWk83NVZtVw0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQ",

"watchtower_token": "tx#okr#n+8_wpf%#n9cxr@30vi7wy_@*@69bw+smfic&k^zb8h",

"docker_username": "username",

"docker_password": "password",

"docker_repository": "repository"

}

Terraform file

    terraform {
      required_providers {
        digitalocean = {
          source  = "digitalocean/digitalocean"
          version = "2.30.0"
        }
        sops = {
          source  = "carlpett/sops"
          version = "~> 0.5"
        }
        cloudflare = {
          source  = "cloudflare/cloudflare"
          version = "4.17.0"
        }
      }
    }

    variable "ssh_public_key" {
      type        = string
      description = "SSH public key"
      default     = "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCeogciUcb1roDZWVXaTFrMSqU66qlb4YT2GhDMZQm+cM6kxAgl5GY72Yiuir/Sml8pHMvTRPV5ezg+17gSntnBtIbf3wNwuB0F/21l7vGS2XteY6p557cRHZjSFuc2uPiysnI21FfZCrsEJ7uM3Ebyd/zJ394URcWQm54NtVh/QxuHzfuK9QCbxhlsXXFAfTnrWvLVGQkq/R+fjtKy12o42Y59JIsZT4aORSGujDiagBysGOCXonYqRhs9gmdZPkcKUe3r8j6fZRY2l8/QX3D6zhDZ8x74Gi70ojuvR8oCsWs9tB2sF/XQi806G/s/mbhh6hcj7ALyo5Th+jw7I8rj matdevdug@matdevdug-ThinkPad-X1-Carbon-5th"
    }

    provider "digitalocean" {
      token = "secret_api_key"
    }

    data "sops_file" "secret" {
      source_file = "secrets.enc.json"
    }

    locals {
      virtual_machines = {
        "server01" = { vm_size = "s-4vcpu-8gb", zone = "nyc1" },
        "server02" = { vm_size = "s-4vcpu-8gb", zone = "nyc1" },
        "server03" = { vm_size = "s-4vcpu-8gb", zone = "nyc1" },
        "server04" = { vm_size = "s-4vcpu-8gb", zone = "nyc1" }
      }
    }

    resource "digitalocean_droplet" "web" {
      for_each = local.virtual_machines
      name     = each.key
      image    = "debian-12-x64"
      size     = each.value.vm_size
      region   = each.value.zone
      user_data = templatefile("${path.module}/cloud-init.yaml", {
        # The variable holds the key itself, not a path, so no file() call here
        init_ssh_public_key = var.ssh_public_key
        private_ssl_key     = data.sops_file.secret.data["private_ssl_key"]
        watchtower_token    = data.sops_file.secret.data["watchtower_token"]
        docker_username     = data.sops_file.secret.data["docker_username"]
        docker_password     = data.sops_file.secret.data["docker_password"]
        docker_repository   = data.sops_file.secret.data["docker_repository"]
      })
    }

    resource "digitalocean_reserved_ip" "example" {
      for_each   = digitalocean_droplet.web
      droplet_id = each.value.id
      region     = each.value.region
    }

Hooking it all together

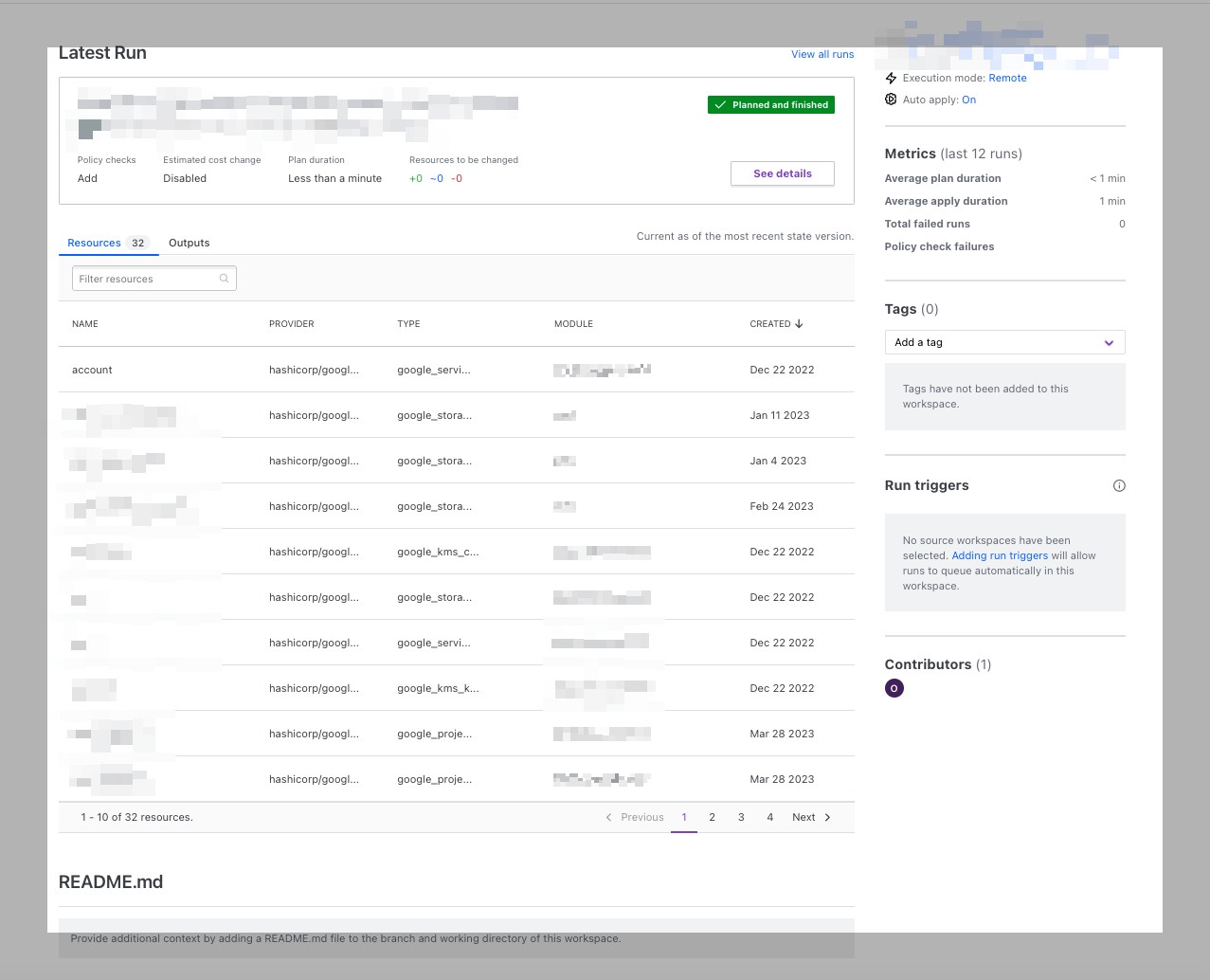

So we'll need to go back to the Cloudflare Terraform and set the reserved IPs we get from the cloud provider as the addresses for the origins. Then we should be able to go through and set up Authenticated Origin Pulls, as well as set SSL to "Strict" in the Cloudflare control panel. Finally, since we have Watchtower set up, all we need to deploy a new version of the application is a simple deploy script that curls each of our servers' IP addresses with the Watchtower HTTP token set, telling it to pull a new version of our container from our registry and deploy it. Read more about that here.
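A rough sketch of what that deploy script could look like in Python instead of a curl loop. It assumes Watchtower's HTTP API is reached at `/v1/update` on port 8080 with a bearer token, which is how the compose file above exposes it; going through nginx on 443 instead would mean changing `scheme` and `port`. The function names are made up for the example.

```python
import ipaddress
import urllib.request


def update_url(host, port=8080, scheme="http"):
    """Build the Watchtower update URL, bracketing IPv6 literals as URLs require."""
    try:
        if ipaddress.ip_address(host).version == 6:
            host = f"[{host}]"
    except ValueError:
        pass  # not an IP literal, treat it as a hostname
    return f"{scheme}://{host}:{port}/v1/update"


def build_update_request(host, token, port=8080, scheme="http"):
    request = urllib.request.Request(update_url(host, port, scheme))
    request.add_header("Authorization", f"Bearer {token}")
    return request


def deploy(hosts, token, opener=urllib.request.urlopen, timeout=120):
    """Trigger an update on each host in turn and stop at the first failure,
    so a bad release does not reach every server."""
    updated = []
    for host in hosts:
        response = opener(build_update_request(host, token), timeout=timeout)
        try:
            if response.status != 200:
                raise RuntimeError(f"{host} answered {response.status}")
        finally:
            response.close()
        updated.append(host)
    return updated
```

Calling `deploy(["2001:db8::1", "2001:db8::2"], token)` walks the servers one at a time. Going sequentially is the design choice that matters here: with four origins and health checks steering traffic, at most one server is restarting its container at any moment.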

In my testing (which was somewhat limited), even though the scripts needed tweaks and modifications, the underlying concept actually worked pretty well. I was able to see all my traffic coming through Cloudflare easily, the SSL components all worked and whenever I wanted to upgrade a host it was pretty simple to stop traffic to the host in the web UI, reboot or destroy and run Terraform again and then send traffic to it again.

In terms of encryption, while my age solution isn't perfect, I think it'll hold together reasonably well. The encrypted file is something you can safely commit to source control, and you can rotate the key pretty easily whenever you want.

Next Steps

- Put the whole thing together in a structured Terraform module so it's more reliable and less prone to random breakage

- Write out a bunch of different cloud provider options to make it easier to switch between them

- Write a simple CLI to remove an origin from the load balancer before running the deploy and then confirm the origin is healthy before sticking it back in (for the requirement of zero-downtime deployments)

- Take a second pass at the encryption story.
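For the CLI idea, the "confirm the origin is healthy" half is the easy part to sketch. This is one possible shape, not a finished tool: `wait_until_healthy` and `http_probe` are hypothetical names, the `alive` body matches the monitor from earlier, and the jitter is there for the reason given in the uptime discussion.

```python
import random
import time
import urllib.request


def http_probe(url, expected=b"alive", timeout=5):
    """Return a zero-argument function that checks one URL for the expected body."""
    def probe():
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200 and expected in response.read()
    return probe


def wait_until_healthy(probe, attempts=10, base_delay=2.0, jitter=1.0, sleep=time.sleep):
    """Call probe() until it returns True or attempts run out.
    Network errors count as 'not healthy yet' rather than aborting."""
    for attempt in range(attempts):
        try:
            if probe():
                return True
        except OSError:
            pass  # urllib's URLError and HTTPError are OSError subclasses
        if attempt < attempts - 1:
            # Jitter keeps several deploys from polling in lockstep.
            sleep(base_delay + random.uniform(0, jitter))
    return False
```

A deploy would then call something like `wait_until_healthy(http_probe("http://[2001:db8::1]:8000/health"))` and only re-enable the origin when it returns True.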

Going through this is a useful exercise in explaining why these infrastructure products are so complicated. They're complicated because the problem is hard and has a lot of moving parts. Even with heavy use of existing tooling, this thing turned out to be more complicated than I expected.

Hopefully this has been an interesting thought experiment. I'm excited to take another pass at this idea and potentially turn it into a more usable product. If this was helpful (or if I missed something basic), I'm always open to feedback. Especially if you thought of an optimization! https://c.im/@matdevdug