I like the Internet. I am old enough to remember the pre-Internet era and despite the younger generations pining for those simpler days, I was there. Paper maps were absolutely horrible, just you and a compass in your car on the side of the road in the middle of the night trying to figure out where you are and where you are going. Once when driving from Michigan to Florida I got so lost in the middle of the night in Kentucky that I had to pull over to sleep and wait for the sun so I could figure out where I was. I awoke to an old man staring unblinkingly into my car, shirtless, breathing heavy enough to fog the windows. To say I floored that 1991 Honda Civic is an understatement.

You would leave your house and then just disappear. This is presented as kind of romantic now, as if we were just free spirits on the wind and could stop and really watch a sunset. In practice it was mostly an annoying game of attempting to guess where people were. You'd call their job, they had left. You'd call their house, they weren't home yet. Presumably they were in transit but you actually had no idea. As a child my response to people asking me where my parents were was often a shrug as I resumed attempting to eat my weight in shoplifted candy or make homemade napalm with gasoline and styrofoam. Sometimes I shudder as a parent remembering how young I was putting pennies on train tracks and hiding dangerously close so that we could get the cool squished penny afterwards.

Cassettes are the worst way to listen to music ever invented. Tapes squealed. Tapes slowed down for no reason, like they were depressed. Multiple times in my life I would set off on a long road trip, pop in a tape, and within fifteen minutes watch as it shot from the deck unspooled like the guts from the tauntaun in Star Wars. You'd then spend forty-five minutes at a Sunoco trying to wind it back in with a Bic pen knowing in your heart you were performing CPR on a corpse. Then you'd put it back in the player out of pure stubbornness, and it would chew itself again immediately, and you'd drive the next six hours in silence with your own thoughts, which were not as good as Pearl Jam.

So I am, mostly, grateful for the bounty the internet has provided. But there is something wrong, deeply wrong, with what we built. The wrongness was there at the start. It was baked into the foundation by people who told themselves a story about freedom, and that story was a lie, and we are all, every one of us, paying their tab.

To understand what happened we need to go back to the 90s.

A Declaration of the Independence of Cyberspace

One of the first and most classic examples of the ideology that powered and continues to power tech is "A Declaration of the Independence of Cyberspace" by John Perry Barlow, written in 1996. You can find the full text here. I remember thinking it was genius when I first read it. I was young enough that I also thought "Snow Crash" was a serious political document. Today the Declaration reads like one of those sovereign citizen TikToks where someone in traffic court is claiming diplomatic immunity under maritime law.

It helps to know who Barlow was. Barlow was a Grateful Dead lyricist. He was also a Wyoming cattle rancher. He was also, briefly, the campaign manager for Dick Cheney's first run for Congress. (You did not misread that.) He spent his later years as a fixture at Davos, the World Economic Forum, where the very wealthy gather each January to remind each other that they are interesting. It was at Davos, in February 1996, fueled by champagne and grievance over the Telecommunications Act, that Barlow banged out the Declaration on a laptop and emailed it to a few hundred friends. From there it became, somehow, one of the founding documents of the modern internet.

These increasingly hostile and colonial measures place us in the same position as those previous lovers of freedom and self-determination who had to reject the authorities of distant, uninformed powers. We must declare our virtual selves immune to your sovereignty, even as we continue to consent to your rule over our bodies. We will spread ourselves across the Planet so that no one can arrest our thoughts.

Many of the pillars of "modern Internet" are here. Identity isn't a fixed concept based on government ID but is a more fluid concept. We don't need centralized control or really any form of control because those things are unnecessary. It was this and the famous earlier "Cyberspace and the American Dream: A Magna Carta for the Knowledge Age" that laid a familiar foundation for a lot of the culture we now have.

The Magna Carta is also our introduction to the (now familiar) creed of "catch up or get left behind". The adoption of new technology must be done at the absolute fastest speed possible with no regulations or checks. You don't need to worry about the consequences of technology because these problems correct themselves. If you told me the following was written two weeks ago by OpenAI I would have believed you.

If this analysis is correct, copyright and patent protection of knowledge (or at least many forms of it) may no longer be necessary. In fact, the marketplace may already be creating vehicles to compensate creators of customized knowledge outside the cumbersome copyright/patent process

The cumbersome copyright/patent process. Cumbersome to whom, exactly? This is always the move. The thing your industry would prefer not to deal with is reframed as an obsolete burden. Your refusal to do it is rebranded as innovation. Your inability to imagine a world where you don't get exactly what you want becomes a manifesto.

Winner Saw It Coming

So there are dozens of these pieces and they all read the same. If you don't regulate these technologies humanity will only benefit. Education, healthcare, industry, etc. We don't need regulations because the transformation from the medium of paper to digital has transformed the human spirit. But one was extremely surprising to me. Langdon Winner wrote something almost prophetic back in 1997. You can read it here.

He coins the term cyberlibertarianism (or at least his is the first mention of it I could find) and then goes on to describe an almost eerily accurate set of events.

In this perspective, the dynamism of digital technology is our true destiny. There is no time to pause, reflect or ask for more influence in shaping these developments. Enormous feats of quick adaptation are required of all of us just to respond to the requirements the new technology casts upon us each day. In the writings of cyberlibertarians those able to rise to the challenge are the champions of the coming millennium. The rest are fated to languish in the dust.

Characteristic of this way of thinking is a tendency to conflate the activities of freedom seeking individuals with the operations of enormous, profit seeking business firms. In the Magna Carta for the Knowledge Age, concepts of rights, freedoms, access, and ownership justified as appropriate to individuals are marshaled to support the machinations of enormous transnational firms. We must recognize, the manifesto argues, that "Government does not own cyberspace, the people do." One might read this as a suggestion that cyberspace is a commons in which people have shared rights and responsibilities. But that is definitely not where the writers carry their reasoning.

What "ownership by the people" means, the Magna Carta insists, is simply "private ownership." And it eventually becomes clear that the private entities they have in mind are actually large, transnational business firms, especially those in communications. Thus, after praising the market competition as the pathway to a better society, the authors announce that some forms of competition are distinctly unwelcome. In fact, the writers fear that the government will regulate in a way that requires cable companies and phone companies to compete. Needed instead, they argue, is the reduction of barriers to collaboration of already large firms, a step that will encourage the creation of a huge, commercial, interactive multimedia network as the formerly separate kinds of communication merge.

In all, he lays out four pillars of this ideology.

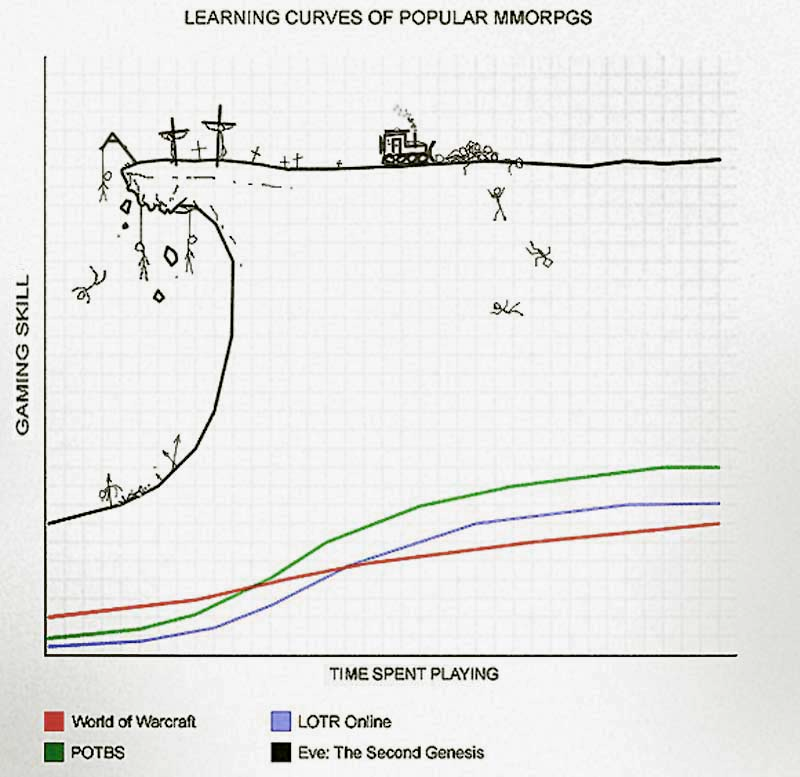

Technological determinism. The new technology is going to transform everything, it cannot be stopped, and your only job is to keep up. Stewart Brand's actual quote, which Winner pulls out and lets sit there like a body on display, is "Technology is rapidly accelerating and you have to keep up." There's no room to ask whether we want any of this. The wave is coming. Surf or drown.

It does not occur to anyone in this discourse that 'drown' is a choice the wave is making, not a natural law. Waves do not have intentions. Destroying your livelihood and leaving you to rot isn't a requirement of the natural order, as much as that would be convenient.

Radical individualism. The point of all this technology is personal liberation. Anything that gets in the way of the individual maximizing themselves, be it government, regulation, social obligation, or your annoying neighbors, is an obstacle to be removed. Winner notes, with what I imagine was a very dry expression, that the writers of the "Magna Carta for the Knowledge Age" cited Ayn Rand approvingly. In 1994. As intellectual grounding. For a document about computers.

There is something deeply funny about a movement claiming to invent the future and grounding its case in a Russian émigré's airport novels about steel barons in love with their own reflections.

Free-market absolutism. Specifically the Milton Friedman, Chicago School, supply-side flavor. The market will sort it out. Regulation is theft. Wealth is virtue. George Gilder, who co-wrote the Magna Carta, had previously written a book called Wealth and Poverty that helped sell Reaganomics to the masses. He then wrote Microcosm, which argued that microprocessors plus deregulated capitalism would liberate humanity. He was very serious about this.

Don't worry, Gilder is still out there. He loves the blockchain and crypto now. He now writes about how Bitcoin will save the soul of capitalism, which it is somehow doing while also destroying the planet. Both can be true in his cosmology. The ideology is flexible like that.

A fantasy of communitarian outcomes. This is the part that should make you laugh out loud. After establishing that government is bad, regulation is theft, and the individual is sovereign, the cyberlibertarians then promise that the result of all this will be... rich, decentralized, harmonious community life. Negroponte: "It can flatten organizations, globalize society, decentralize control, and help harmonize people." Democracy will flourish. The gap between rich and poor will close. The lion will lie down with the lamb, and the lamb will have a Pentium II.

We also have the advantage of hindsight and know, without question, that all of these predicted outcomes were wrong. Not 'directionally wrong' or 'wrong in the details.' Wrong the way it would be wrong to predict that if you set your kitchen on fire, the result will be a renovation.

You have to hold these four ideas in your head at the same time to see the trick. The cyberlibertarians wanted you to believe that radical individualism plus deregulated capitalism plus inevitable technology would produce communitarian utopia. This is, on its face, insane. It is the economic equivalent of claiming that if everyone punches each other really hard, eventually we'll all be hugging.

But Winner's sharpest observation, the one I keep coming back to, isn't about any of the four pillars individually. It's about the move underneath them. He writes:

"Characteristic of this way of thinking is a tendency to conflate the activities of freedom seeking individuals with the operations of enormous, profit seeking business firms."

This is the entire game. This is how "don't tread on me" becomes "Meta should be allowed to do whatever it wants." This is how the rights of the lone hacker working in their garage become indistinguishable from the rights of a multinational with a market cap larger than most countries' GDP. The Magna Carta literally argues that the government should reduce barriers to collaboration between cable companies and phone companies in the name of individual freedom and social equality. Winner caught this in 1997.

That is why obstructing such collaboration – in the cause of forcing a competition between the cable and phone industries – is socially elitist. To the extent it prevents collaboration between the cable industry and the phone companies, present federal policy actually thwarts the Administration's own goals of access and empowerment.

What makes the essay uncomfortable to read now is that Winner wasn't even predicting the future. He was just describing what was already happening and noting where it would obviously lead. He saw the media mergers and asked the question nobody in the industry wanted to answer: what happened to the predicted collapse of large centralized structures in the age of electronic media? Where, exactly, did the decentralization go? He saw that the cyberlibertarians were going to deliver the opposite of everything they promised, and that they were going to keep getting paid to promise it anyway.

He was writing before Google. Before Facebook. Before the iPhone. Before YouTube. Before Twitter, Bitcoin, Uber, Airbnb, OpenAI, and the entire app economy. Before any of the actual examples that would eventually prove him right existed. He just looked at the people doing the talking, listened to what they were saying, and wrote down where it ended. It is not a long essay. He didn't need a long essay. The future was right there on the page, in their own words. He just had to read it back to them.

The essay closes with a question that has, to my knowledge, never been seriously answered by the industry it was aimed at:

"Are the practices, relationships and institutions affected by people's involvement with networked computing ones we wish to foster? Or are they ones we must try to modify or even oppose?"

Twenty-eight years later, the industry still treats this question as somewhere between naive and seditious. It's the question Barlow's declaration was specifically designed to make unaskable. And it remains, to this day, the only question that actually matters.

Caveat emptor

When you look at these early formative writings, so much of what we see now becomes clear. The cyberlibertarian deal was always the same: you're on your own. The industry would build the infrastructure, take the profits, and shove every consequence, every harm, every cost, every responsibility, onto somebody else.

There is no greater example to me than the moderator. Anyone who has ever moderated a forum or a subreddit knows that adding the word "cyber" to a space doesn't suddenly turn people into better humans. People are still people. They flame each other, they post slurs, they doxx, they harass, they spam, they post CSAM, they radicalize each other, they grief, they coordinate, they lie. A space with humans in it requires governance.

Online spaces produce, with frightening regularity, the exact behavior any kindergarten teacher could have predicted. Then the platforms act surprised.

But the cyberlibertarian model required pretending it was unforeseeable. The platforms couldn't acknowledge that they needed governance because acknowledging it would mean acknowledging responsibility, and acknowledging responsibility would mean acknowledging liability, and acknowledging liability would mean the entire economic model collapses. So instead the industry invented a beautiful fiction: governance happens, but it happens by magic, performed by volunteers, for free, who we will simultaneously rely on and mock.

Reddit is run by unpaid moderators. Wikipedia is run by unpaid editors. Stack Overflow was run by unpaid experts and is now a ghost town. On TikTok and Twitter it is the unknowable "algorithm" that is the cause of and solution to every problem, backed by capricious moderators who delight in stopping free speech. Unless it's speech you don't like, in which case it's negligent moderation in defense of your enemies.

Open source is run by unpaid maintainers having nervous breakdowns. The platforms collect the rent. The people doing the actual work of making the platforms livable get nothing, and when they ask for anything like recognition, tools, basic protection from harassment, they're told they're power-tripping nerds who should touch grass.

This is also the crypto story, just with the masks off. What if we made worse money on purpose, money that bypassed every protection consumers had won over the previous century, money that couldn't be reversed when stolen, money that funded ransomware attacks on hospitals and pump-and-dumps targeting people's retirement accounts? The cyberlibertarian answer was: that's freedom. The losses were real. People killed themselves. Hospitals had to turn away patients. The architects became billionaires and bought yachts and now sit on the boards of AI companies, where they are reinventing the same con with a new vocabulary.

Now Winner got one thing wrong, and it's worth pausing on, because it's the most interesting wrinkle in all of this. What actually happened was weirder and worse. The cyberlibertarians became the corporations. They didn't sell out. They didn't betray their principles for the first offer of money. They simply scaled until their principles became inconvenient, and then they stopped mentioning them.

Once the platforms got large enough to be unstoppable, once they captured enough of the regulatory apparatus to write their own rules, the libertarian rhetoric got quietly shelved like a college poster you took down before your in-laws came over. Meta no longer pretends it stands for free speech and seemingly takes delight in putting its thumb on the scale. TikTok users have invented an entire euphemistic shadow language to evade automated censorship, words like "unalive," "le dollar bean," and "graped," that would have made 1996 Barlow weep into his bolo tie.

Copyrights and patents matter when they're Apple's copyrights and patents. Or Google's. Or OpenAI's. Go try to make a Facebook+ website and see how quickly Meta is capable of responding to content it finds objectionable.

Cyberlibertarianism was the ladder. Once they were on the roof, they kicked it away and started charging admission to look at the view.

So the Internet is Doomed?

Remember, I like the Internet. I said it in the beginning and it is still true. I love the Fediverse, I love the weird Discords I'm in about small tabletop RPGs. I spend hours in the MiSTer FPGA forums. There are corners that are good. But they're mostly good because they're not big enough to be worth breaking up.

It feels increasingly like I'm hanging out in the old neighborhood dive bar after most of the regulars have moved away. The lighting is the same. The bartender remembers your order. But you can hear yourself think now, and that's mostly because the room is half empty and the jukebox finally died. The new clientele is from out of town. They are taking pictures of the menu.

If we want to have a serious conversation about why we are in the situation we're in, it is no longer possible to pretend that the broken ideology that put us on this trajectory is still somehow compatible with the harsh realities that surround us. It is not clear to me if democracy can survive a deregulated Internet. A deregulated Internet filled with LLMs that can perfectly impersonate human beings, powered by unregulated corporations with zero ethical guidelines, seems like a somewhat obvious problem. Like an episode of Star Trek where you, the viewer, are like "well clearly the Zorkians can't keep the Killbots as pets." It doesn't take some giant intellect to see the pretty fucking obvious problem.

If we want to save the parts of the internet worth saving, we have to evolve. We have to find some sort of ethical code that says: just because I can do something and it makes money, that is not sufficient justification to unleash it on the world. Or, more simply: just because I want to do something and you cannot actively stop me, that does not make doing it a good idea. We have waited thirty years for the cyberlibertarian future to arrive and produce the promised harmonious community. It's time to face the facts. It's never coming. The bus left in 1996. The bus was never real.

People did not get better because they went online. Giving everyone access to a raw, unfiltered pipeline of every fact and lie ever produced did not turn them into better-educated people. It broke them. It allowed them to choose the reality they now inhabit, like ordering off a menu. If I want to believe the world is flat, TikTok will gladly serve me that content all day. Meta will recommend supportive groups. There will be hashtags. There will be Discords. There will be a guy named Trent who runs a podcast. I will never have to face the deeply uncomfortable possibility that I might be wrong about anything, ever, until the day I die, surrounded by people who agree with me about everything, including which of the other mourners are secretly lizards.

That is the internet we built. It was not an accident. It was the product of a specific ideology, written down by specific people, at a specific cocktail party in Davos, in 1996. Winner watched it happen and told us where it was going. We did not listen. There is still time, maybe, to start.